We are currently performing a large cross-account migration which involves migrating a rather large Aurora cluster. To do this smoothly I wanted to replicate to the new cluster in the new account from the existing cluster in the old account.

AWS does have official documentation on this here, but I found I was able to do this without having to dump and re-import the database, which is nice with a 600+ gig database.

Here are the steps I took to do this. I would still recommend reading the official documentation from AWS on this topic.

Enable Binary Logging / Set Retention

Binary logging will need to be enabled on the existing cluster. This is done in the Aurora cluster’s “DB cluster parameter group” by updating the “binlog_format” parameter. I set this to “Mixed” and that is recommend format unless you have a specific need for another format.

After you make this change you will need to reboot your cluster for it to take effect.

You can check to see if this has taken effect by connecting to the “Writer” in the cluster and running show global variables like 'binlog_format'.

Expected output:

MySQL [(none)]> show global variables like 'binlog_format';

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| binlog_format | MIXED |

+---------------+-------+

1 row in set (0.00 sec)

Now that binary logging in enabled we can set the retention time for the binary logs with the following command:

CALL mysql.rds_set_configuration('binlog retention hours', 144);

This will retain the binary logs for 144 hours (6 days). You can modify this as needed up to a max of 2160 hours (90 days). 144 hours should be more then enough for our needs.

Create Snapshot

After binary logging is enabled and retention is set we can take a snapshot of our existing cluster. Make sure to take a snapshot of the instance that is the “Writer”. (Note: Make sure followed the steps above to enable binary logging before creating the snapshot).

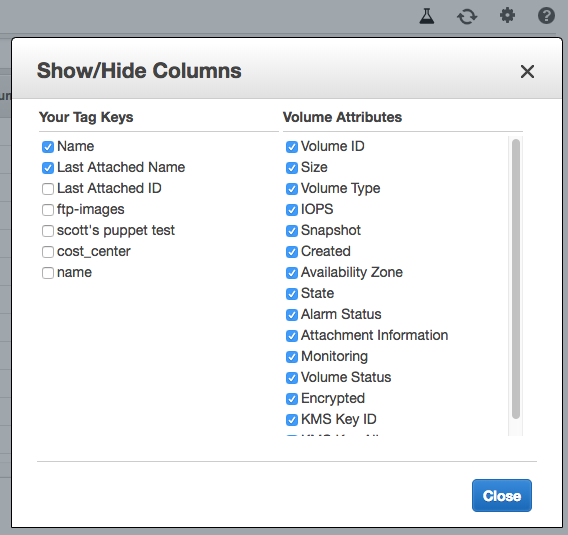

You can now share the snapshot with the new account. You can see specific instructions on how to do that here.

Once the snapshot is shared with the new account you can now restore the snapshot to a new Aurora cluster.

Restore Snapshot / Create New Cluster

Important Note: You must make sure that binary logging is also enabled the DB cluster parameter group for the new cluster you are creating. This will allow us to get the snapshot’s position in the binary log.

Depending on the size of the snapshot it may take some time for the instance to restore. Once the new cluster is up and running you will want to check the “Recent Events” for an event like the following:

Binlog position from crash recovery is mysql-bin-changelog.000002 85340883

Take note of this as we will use it to start up replication.

Create Replication User / Check Access

A user must be created for the new cluster to use to replicate from the original cluster. Creating a MySQL user is outside the scope of this post, but AWS has examples in the official docs here.

The important thing is that the user has the “REPLICATION CLIENT, REPLICATION SLAVE” global privileges.

You will also want to make sure that your new Aurora cluster can reach the existing cluster. You may have to modify security group access for this. We peered our VPCs to make the migration easier.

Start Replication

We are now ready to start up replication! Since this is Aurora setting up and enabling replication is a little different then vanilla MySQL/MariaDB. We use a couple stored procedures to do this.

Use the binlog file and position we got from the “Recent Events” to set master information.

Example:

CALL mysql.rds_set_external_master ('existing-cluster-old-account.cluster-ro-dasodjfja.us-east-1.rds.amazonaws.com', 3306, '<repl_user>', '<password>', 'mysql-bin-changelog.000002', 85340883, 0);

Now that is set we are ready to start replication:

CALL mysql.rds_start_replication;

You can then check the status of the replication with:

MySQL [(none)]> show slave status\G;

Hopefully everything should now be replicating. As mentioned before if you have any issues I would recommend taking a look at the AWS documentation on this topic.